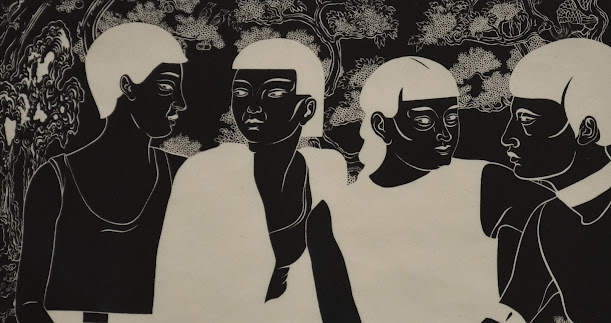

A.I. : '' BLACK STUDENTS BLUR ''

THE PRIZE OF HONOURS : THE WORLD STUDENTS SOCIETY NEED NOT be world disturbing or world disrupting to be World Changing. I deeply and most touchingly thank all Leaders, Grandparents, Parents, Students, and Teachers.

In continuation, I have the singular honour to confer and bestow all the laurels, awards, paeans, acclaim and applause that I received on the outstanding devotion, hard work, creativity and talent of the Global Founder Framers, who are forward-looking for generations and generations to come.

!WOW! is now a global name. In the times ahead, the Students of the world will help the world prepare for A.I. and a new world. We have a long-term orientation. Welcome to The World Students Society - for every subject in the world.

BLACK STUDENTS - ARTISTS SEE CLEAR BIAS IN A.I. Almost all tech companies agree that algorithms can perpetuate discrimination.

TIME ENOUGH to ask some tough questions about the relationship between A.I. and race. Many Black artists are finding evidence of racial bias in artificial intelligence, both in the large data sets that teach machines how to generate images and in the underlying programs that run the algorithms.

In some cases, A.I. technologies seem to ignore or distort artists' text prompts, affecting how Black people are depicted, and in others, they seem to stereotype or censor Black history and culture.

Discussions of racial bias within artificial intelligence have surged in recent years, with studies showing that facial recognition technologies and digital assistants have trouble identifying the images and speech patterns of nonwhite people. The studies raised broader questions of fairness and bias.

The artist Stephanie Dinkins has long been a pioneer in combining art and technology in her Brooklyn-based practice. In May, she was awarded $100,000 by the Guggenheim Museum for her groundbreaking innovations, including an ongoing series of interviews with Bina48, a humanoid robot.

FOR the past seven years, she has experimented with A.I.'s ability to realistically depict Black women, smiling and crying, using a variety of word prompts. The first results were lackluster, if not alarming: her algorithm produced a pink-shaded humanoid shrouded in a black cloak.

''I EXPECTED something with a little more semblance of Black womanhood,'' she said. And although the technology has improved since her first experiments, Dinkins found herself using roundabout terms in the text prompts to help the A.I. image generators achieve her desired image, ''to give the machine a chance to give me what I wanted.''

But whether she uses the term ''African American woman'' or ''Black woman,'' machine distortions that mangle facial features and hair textures occur at high rates.

'' Improvements obscure some of the deeper questions we should be asking about discrimination,'' Dinkins said. The artist, who is Black, added : '' The biases are embedded deep in these systems, so it becomes ingrained and automatic.

'' If I am working within a system that uses algorithmic ecosystems, then I want that system to know who Black people are in nuanced ways, so that we can feel better supported.''

Major companies behind A.I. image generators - including OpenAI, Stability AI and Midjourney - have pledged to improve their tools.

'' Bias is an important, industrywide problem,'' Alex Beck, a spokeswoman for OpenAI, said in an email interview, adding that the company is continuously trying ''to improve performance, reduce bias and mitigate harmful outputs.''

She declined to say how many employees were working on racial bias, or how much the company had allocated toward the problem.

'' Black people are accustomed to being unseen,'' the Senegalese artist Linda Dounia Rebeiz wrote in an introduction to her exhibition '' In / Visible,'' for Feral File, an NFT marketplace. '' When we are seen, we are accustomed to being misrepresented.''

To prove her point during an interview with a reporter, Rebeiz asked OpenAI's image generator, DALL-E 2, to imagine buildings in her hometown, Dakar. The algorithm produced arid desert landscapes and ruined buildings that Rebeiz said were nothing like the coastal homes in the Senegalese capital.

'' It's demoralizing, '' Rebeiz said. '' The algorithm skews toward a cultural image of Africa that the West has created. It defaults to the worst stereotypes that already exist on the Internet.''

LAST YEAR, OpenAI said it was establishing new techniques to diversify the images produced by DALL-E 2, so that the tool ''generates images of people that more accurately reflect the diversity of the world's population.''

An artist featured in Rebeiz's exhibition, Minne Atairu, is a Ph.D. candidate at Columbia University's Teachers College who planned to use image generators with young students of color in the South Bronx area of New York City.

But she now worries '' that might cause students to generate offensive images,'' Atairu said.

Included in the Feral File exhibition are images from her ''Blonde Braids Studies,'' which explore the limitations of Midjourney's algorithm to produce images of Black women with natural blond hair.

When the artist asked for an image of Black identical twins with blond hair, the program instead produced a sibling with lighter skin.

The Honour and Serving of the Latest Global Operational Research on Artificial Intelligence, Bias and Future continues. The World Students Society thanks author Zachary Small.

With most respectful dedication to the Scientists, Researchers, Mankind, Law Enforcement Agencies, and then Students, Professors and Teachers of the world.

See You all prepare for Great Global Elections on The World Students Society - the exclusive ownership of every student in the world : wssciw.blogspot.com and Twitter - !E-WOW! - The Ecosystem 2011 :

Good Night and God Bless

SAM Daily Times - the Voice of the Voiceless
